File this under “previously unsaid sentences in human history.”

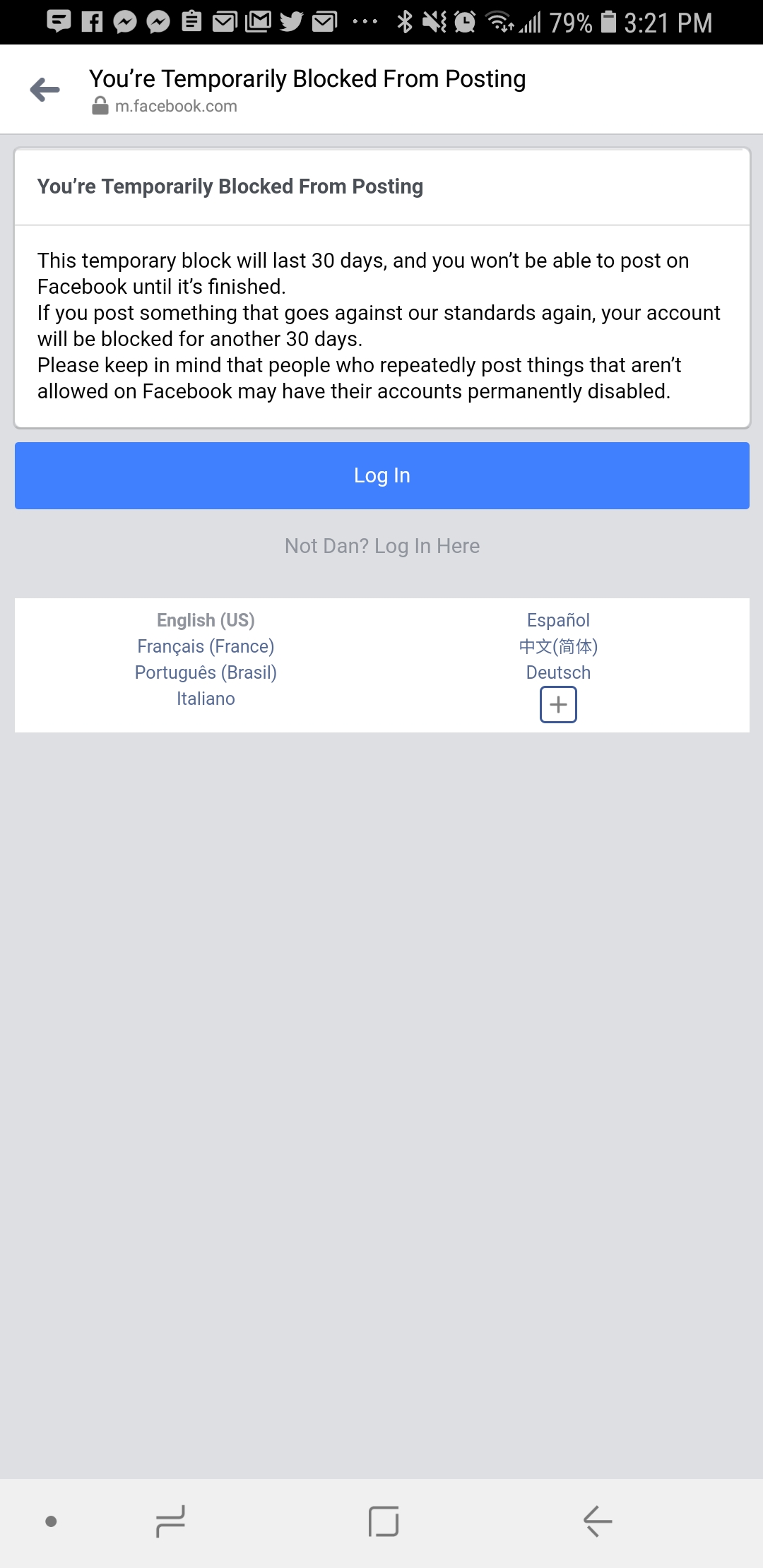

Facebook banned my personal account from posting for thirty days because I posted a meme that made fun of Nazis. Really.

I’ve been blocked from posting on my personal account more than a few times. It’s the risk you run when you enjoy shitposts – memes that make little or no sense and/or are so unfunny that they are funny.

Back when Kaepernick/Nike memes were popular, I posted a parody meme of Osama Bin Laden with the text “Believe in something. Even if it means sacrificing everything.”

You can view the meme itself here. Spicy, I know.

But it was using Nike’s own advertising slogan and applying it to a different person in a way that was critical of Nike and the hypocrisy of their ad campaign. Nike was pretending that they cared about doing what’s right, no matter the cost, while simultaneously shafting New York Knicks center Enes Kanter because he had the audacity to criticize the Turkish government.

The emotional toll is obvious, but Kanter’s sacrifice is evident elsewhere. He can’t leave North America and hasn’t been able to secure any endorsement deals. Nike, the same company that championed Colin Kaepernick’s controversial remonstration by putting him on the frontlines of a recent ad campaign, now refuses to sign Kanter. ‘I talked to Nike and they said, ‘We want to give Enes a contract. We’re watching him. But if we give him a contract they will shut down every store in Turkey, so we cannot give him a contract,’ he says. (Bleacher Report)

And there’s also the whole “sweat shop” thing, too.

Another example that comes to mind was back in 2017 when I posted this meme, inspired by a real person who lives in Flint, Michigan, which was also removed by Facebook. I was in Facebook jail for a month.

¯\_(ツ)_/¯

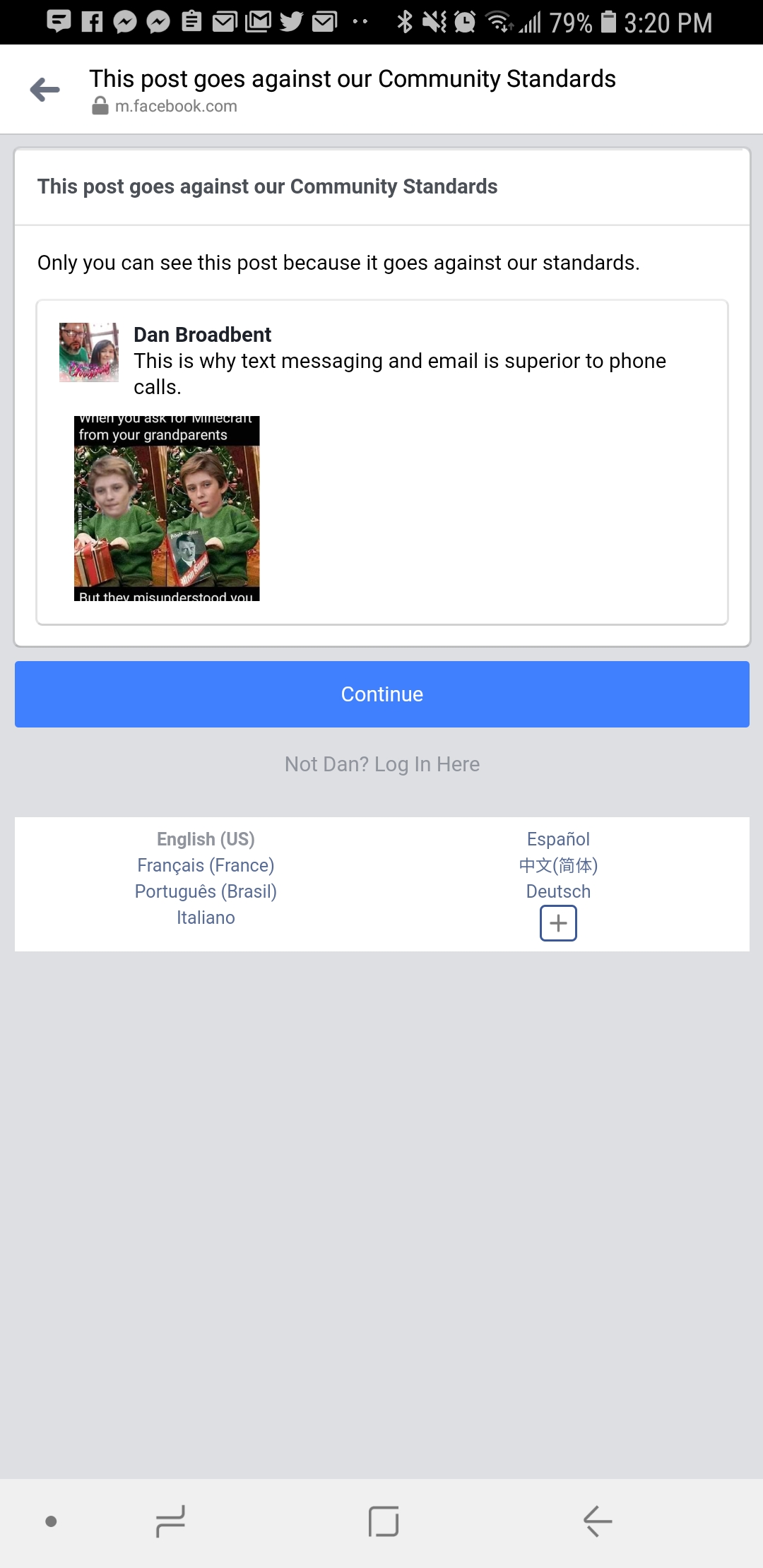

My most recent, and current, stint in the Facebook slammer came from this holiday-themed meme:

Note: If you’re a fan of my pages, you haven’t noticed any change in the number of posts going out since my personal account got blocked on December 23rd. That’s because I’ve given multiple people posting permission on the pages, and I use Buffer, a tool that makes posting content on social media so much easier, to post to Facebook/Twitter/Instagram. To use Buffer, you have to link a personal Facebook account to it, so I simply had one of my mods link their account. Anyone who has permission inside the Buffer app can still post to the pages without using the Facebook app itself, and Facebook treats it as that person posting to the public page. This does not go against Facebook’s TOS, and is not circumventing the block Facebook placed on my personal account.

Facebook has suspended and even deleted pages of mine before. Most notably (and what just about gave me a heart attack at the time), Facebook unpublished the A Science Enthusiast page in April 2017 and threatened to delete it completely if I lost the appeal.

Seems vague and not correct. I’m always careful to attack ideas, rather than people. It might have to do with #NuggetGate? Not sure. pic.twitter.com/DLUD2E95SR

— Dan Broadbent 🚀 (@aSciEnthusiast) April 12, 2017

Facebook never told me what the issue was, but I can only assume it had to do with a post about chicken nuggets – which I referred to as #NuggetGate – that Facebook somehow claimed was bullying. I won the appeal, but it was a stressful 48 hours while the flagship aSE page was in Facebook purgatory.

I survived #NuggetGate and got my page re-published, much to the chagrin of many. 😀 pic.twitter.com/5SvOljHDFX

— Dan Broadbent 🚀 (@aSciEnthusiast) April 14, 2017

Facebook & Nazis

I’m not saying that Facebook supports Nazis or is defending Nazis. Because I don’t think they are. What I am saying is that Facebook needs to do better at moderating content on their platform and provide some kind of transparency and appeal option for cases like this.

The meme I’m currently banned for – a joke about Mein Kampf – is a bit spicy, but is not an endorsement of Nazi ideology in any way, shape, or form. Parody, such as the meme I posted, takes away some level of power from those who are either in a position of authority or appear to be in a position of authority.

I was widely criticized by fans of the page a couple years ago after I said that punching Nazis is a bad idea. I don’t regret anything I said, and still believe this is true. (Of course this is only speaking in reference to philosophical discourse – not actual acts of physical violence.)

You shouldn’t use violence to combat bad ideology. If you truly think your ideology is superior, violence isn’t necessary.

As Moises Velasquez-Manoff wrote in his New York Times opinion piece How to Make Fun of Nazis:

Those I spoke with appreciated the sentiment of the antifa, or anti-fascist, demonstrators who showed up in Charlottesville, members of an anti-racist group with militant and anarchist roots who are willing to fight people they consider fascists. “I would want to punch a Nazi in the nose, too,” Maria Stephan, a program director at the United States Institute of Peace, told me. “But there’s a difference between a therapeutic and strategic response.”

The problem, she said, is that violence is simply bad strategy.

Violence directed at white nationalists only fuels their narrative of victimhood — of a hounded, soon-to-be-minority who can’t exercise their rights to free speech without getting pummeled. It also probably helps them recruit. And more broadly, if violence against minorities is what you find repugnant in neo-Nazi rhetoric, then “you are using the very force you’re trying to overcome,” Michael Nagler, the founder of the Peace and Conflict Studies program at the University of California, Berkeley, told me.

Ridiculing those in power or ridiculing those who believe in completely atrocious ideologies takes some of their power and mystique away. It makes them appear less of a threat, less of an authority figure, less intimidating, and hopefully gives would-be fans a moment of pause to contemplate their beliefs.

Back to Facebook

As I said earlier, I have no doubt that Facebook’s position on Nazis is that Nazis are bad. I always try to assume the best of people until proven otherwise, and I haven’t seen sufficient evidence that leads me to believe Facebook is pro-Nazi. Even if leadership at Facebook were pro-Nazi, acting on it wouldn’t make any business sense for them at all. So there’s that, too.

This is a problem that no company has ever had to deal with before. The amount of data Facebook deals with is absolutely staggering.

Facebook has 2.27 billion monthly users, 1.49 billion of whom are active on any given day. Five new Facebook profiles are created every second of every day. There are 300 million photos uploaded to Facebook every single day. Each and every minute of the day, over half a million comments are posted and 293,000 statuses are updated. (Source)

For more insight into Facebook’s current moderation process, I highly encourage you to listen to a recent Radiolab episode titled Post No Evil. In the episode, they talk to people who currently work for Facebook and people who have worked there in the past. It’s a very nuanced and fair look into the daunting task Facebook is faced with. The bottom line is that the moderators are often overseas, may not have the pop-cultural awareness someone based in the US would have, are incredibly underpaid for their work, on average have under two seconds to review each post that gets reported, and often will allow their own personal biases to influence their moderation decisions.

My friend Seth Andrews, who does The Thinking Atheist podcast, also did an episode about Facebook’s moderation policies.

It is unrealistic to expect Facebook to be perfect. But I would prefer that they err on the side of caution rather than imposing severe punishments for things that are ultimately nothingburgers.

I understand that not everyone would find anti-Nazi memes amusing, and they’d rather not see them come up in their feed when one of their friends shares them. But Facebook has tools they’ve implemented to help improve the user’s experience. They put covers on images, videos, and cover images for articles to alert the user to content that they may find offensive.

But even then, it’s not consistent. It wasn’t hard for me to find a video of a person dying on Facebook. This video, posted nearly two years ago, shows someone’s death, and does not have a content warning cover on it at all. There are many more videos like this that show people dying in truly horrifying ways.

But like I said, I don’t expect perfection from Facebook. That’s unrealistic and will never happen. But I do expect Facebook to do better, and to be more transparent.